The easiest way to back up Linux machines is to tar up the data, compress it, and store it remotely. It is therefore worth knowing and understanding the compression options available for long-term storage of that data.
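As a rough sketch of the tar-and-compress step, Python's standard `tarfile` module can write a compressed archive in one pass. The `site` directory, the `backup` file stem and the `make_archive` helper are made-up names for illustration, and storing the result remotely is left out.

```python
import tarfile
import tempfile
from pathlib import Path

# tarfile write mode and conventional suffix for each compressor.
TAR_MODES = {
    "gzip": ("w:gz", ".tar.gz"),
    "bzip2": ("w:bz2", ".tar.bz2"),
    "xz": ("w:xz", ".tar.xz"),
}


def make_archive(source_dir: str, dest_stem: str, compressor: str = "gzip") -> Path:
    """Tar up source_dir and compress it in one pass; return the archive path."""
    mode, suffix = TAR_MODES[compressor]
    archive = Path(dest_stem + suffix)
    with tarfile.open(archive, mode) as tar:
        # arcname stores only the directory's own name inside the archive.
        tar.add(source_dir, arcname=Path(source_dir).name)
    return archive


# Demo on a throwaway directory so the sketch runs anywhere.
with tempfile.TemporaryDirectory() as tmp:
    site = Path(tmp) / "site"  # hypothetical data directory
    site.mkdir()
    (site / "index.php").write_text("<?php echo 'hello'; ?>\n" * 200)
    created = {}
    for tool in TAR_MODES:
        archive = make_archive(str(site), str(Path(tmp) / "backup"), tool)
        created[archive.name] = archive.stat().st_size

print(created)  # archive name -> size in bytes
```

On the command line the equivalent is `tar` with the `-z`, `-j` or `-J` flag for gzip, bzip2 or xz respectively.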

Most Linux compression analyses on the web are a complete data overload, with pages and pages of data dumps and analysis. The idea of this article is to keep it simple for the end user: just enough data to understand what is different, so that you can walk away knowing what will work for you.

Quick Note on Lossy and Lossless Compression

Lossy compression is used for music and images, where small deviations from the original after decompression do not make a huge difference. The artifacts may be unnoticeable to the user, but the decompressed data will definitely deviate from the original. The higher the compression ratio, the more the compression starts to affect significant portions of the data. That is not the focus of this article.

We are focusing on lossless compression, the kind of compression where the data can be recovered exactly in its original form. We analyze the options available and the trade-offs in terms of storage size, time, and memory used.
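To make "lossless" concrete, the following minimal sketch checks that each format returns exactly the bytes that went in. It uses Python's standard `gzip`, `bz2` and `lzma` modules as stand-ins for the command line tools; the sample payload and the `round_trips` helper are invented for illustration.

```python
import bz2
import gzip
import lzma

# Sample payload; any bytes object works.
original = b"The quick brown fox jumps over the lazy dog.\n" * 1000

# (compress, decompress) pair for each format.
CODECS = {
    "gzip": (gzip.compress, gzip.decompress),
    "bzip2": (bz2.compress, bz2.decompress),
    "xz": (lzma.compress, lzma.decompress),
}


def round_trips(data: bytes) -> dict:
    """Report, per codec, whether decompress(compress(data)) equals data."""
    return {
        name: decompress(compress(data)) == data
        for name, (compress, decompress) in CODECS.items()
    }


print(round_trips(original))
# {'gzip': True, 'bzip2': True, 'xz': True}
```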

The Compression Options in Linux

gzip

The gzip tool is still considered the classic method of compressing data on any Linux machine. It is rock solid, having been around since the early 1990s. gzip uses the DEFLATE algorithm, a combination of Lempel-Ziv (LZ77) coding and Huffman coding, and produces files with the extension .gz. One advantage of gzip is that it can compress a stream, a sequence where you can’t look behind. This is one reason it became the standard compressor for HTTP streams.
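Stream compression can be sketched as follows: data is fed to the compressor chunk by chunk, and output is emitted without ever revisiting earlier input. This is an illustration using Python's `zlib` module to produce gzip-format output, not how the gzip tool itself is written; `gzip_stream` and the sample chunks are hypothetical.

```python
import gzip
import zlib
from typing import Iterable, Iterator


def gzip_stream(chunks: Iterable[bytes], level: int = 6) -> Iterator[bytes]:
    """Compress chunks as they arrive, never looking back at earlier input."""
    # wbits=31 asks zlib to wrap the compressed data in a gzip header and trailer.
    compressor = zlib.compressobj(level, zlib.DEFLATED, 31)
    for chunk in chunks:
        out = compressor.compress(chunk)
        if out:  # the compressor may buffer input and emit nothing for a while
            yield out
    yield compressor.flush()  # write whatever is still buffered, plus the trailer


# A generator stands in for data arriving over time.
chunks = (f"line {i}\n".encode() for i in range(10_000))
compressed = b"".join(gzip_stream(chunks))

# The result is an ordinary gzip stream that any gzip reader can decompress.
restored = gzip.decompress(compressed)
```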

gzip is built for speed and can both compress and decompress at a much faster rate than most other tools. It is also very resource efficient, needing little memory during either compression or decompression.

Compatibility is its biggest advantage. Since gzip is such an old and stable tool, almost every Linux system will have it available to decompress the data.

bzip2

bzip2 offers a high compression ratio together with reasonably fast speed. It is built primarily on the Burrows-Wheeler algorithm, which makes it very different from gzip. Each file it compresses is replaced by one with the extension .bz2.

The bzip2 tools can create significantly more compact files than gzip, but take much longer to do so because bzip2 stacks several layers of compression techniques on top of each other. It also uses more memory.

An interesting facet of bzip2 is that its performance is asymmetric: decompression is relatively fast compared to compression.
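One way to observe this asymmetry yourself is to time both directions on the same data. The sketch below uses Python's `bz2` module; the `time_bzip2` helper and the sample payload are assumptions for illustration, and the timings will vary by machine and input, so none are shown here.

```python
import bz2
import time


def time_bzip2(data: bytes, level: int = 9) -> tuple[float, float, bytes]:
    """Return (compress_seconds, decompress_seconds, restored_data)."""
    start = time.perf_counter()
    packed = bz2.compress(data, compresslevel=level)
    compress_s = time.perf_counter() - start

    start = time.perf_counter()
    restored = bz2.decompress(packed)
    decompress_s = time.perf_counter() - start
    return compress_s, decompress_s, restored


# Hypothetical sample; substitute the bytes of a real tarball for a fair test.
sample = b"".join(f"record {i}: some repetitive text\n".encode() for i in range(50_000))
compress_s, decompress_s, restored = time_bzip2(sample)
print(f"compress: {compress_s:.3f}s  decompress: {decompress_s:.3f}s")
```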

xz

The xz compression utilities use a compression algorithm known as LZMA2. This algorithm typically achieves a higher compression ratio than bzip2 and gzip, making it a good option when you need to store data for long periods and have limited disk space.

While the compressed files that xz produces are smaller than those of the other utilities, it takes many times longer to do the compression. The xz tools also use a lot of memory, far more than the other two methods. If you are on a 512 MB VPS, this might be a big problem.

Like bzip2, xz has relatively good decompression times. It is usually significantly faster at decompressing than at compressing, and its memory usage during decompression is also not as high.
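With xz, the size, time and memory trade-off is controlled by a preset from 0 (fastest) to 9 (smallest output). The xz man page lists roughly 94 MiB of compressor memory at the default preset 6 and several hundred MiB at preset 9. The sketch below compares output sizes for a few presets using Python's `lzma` module; `xz_sizes` and the sample data are invented for illustration, and preset 9 is left out by default to keep memory use modest.

```python
import lzma


def xz_sizes(data: bytes, presets=(0, 3, 6)) -> dict:
    """Compressed size in bytes for each xz preset (0 = fastest, 9 = smallest)."""
    return {preset: len(lzma.compress(data, preset=preset)) for preset in presets}


# Hypothetical sample; substitute the bytes of a real tarball for a fair test.
sample = b"".join(f"user{i % 97},page{i % 13},200\n".encode() for i in range(50_000))

for preset, size in xz_sizes(sample).items():
    print(f"preset {preset}: {size} bytes ({100 * size / len(sample):.1f}% of original)")
```

On a small VPS, picking a lower preset is one way to keep xz's memory use under control, at the cost of a somewhat larger file.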

Kick Off

Let us compress a sample tarball created from a bare WordPress installation. This is a good representative sample of typical data on Linux machines.

We will try to measure both the compression achieved and the time taken.
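A measurement of this kind can be sketched in a few lines. This is not the script used for the charts in this article; it is a minimal illustration using Python's bindings to the same three formats, with each level set to match the default of the corresponding command line tool. The `benchmark` helper and the sample payload are assumptions.

```python
import bz2
import gzip
import lzma
import time

# Levels chosen to match the command line defaults (gzip -6, bzip2 -9, xz -6).
# Note that Python's gzip.compress would otherwise default to level 9.
COMPRESSORS = {
    "gzip": lambda data: gzip.compress(data, compresslevel=6),
    "bzip2": lambda data: bz2.compress(data, compresslevel=9),
    "xz": lambda data: lzma.compress(data, preset=6),
}


def benchmark(data: bytes) -> list[dict]:
    """Measure compressed size (as % of original) and wall time per tool."""
    rows = []
    for tool, compress in COMPRESSORS.items():
        start = time.perf_counter()
        packed = compress(data)
        elapsed = time.perf_counter() - start
        rows.append({
            "tool": tool,
            "percent_of_original": 100 * len(packed) / len(data),
            "seconds": elapsed,
        })
    return rows


# Hypothetical sample; to test a real tarball, read its bytes from disk instead.
sample = b"".join(f"<p>post {i}: hello world</p>\n".encode() for i in range(50_000))
for row in benchmark(sample):
    print(f"{row['tool']:6} {row['percent_of_original']:5.1f}%  {row['seconds']:.3f}s")
```

Timings taken inside Python will not match the command line tools exactly, but the relative ordering should give a feel for the differences.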

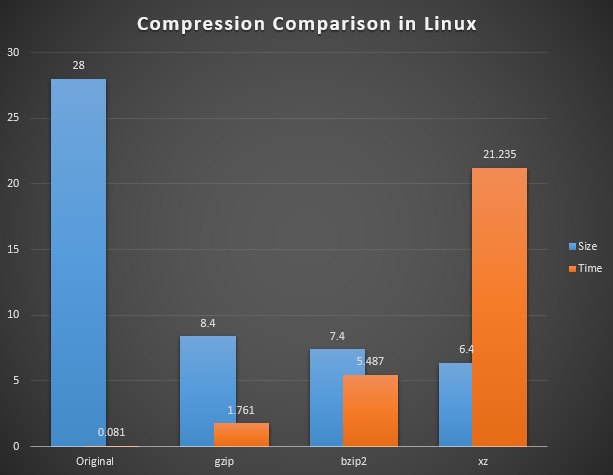

The options throw up some simple but interesting data

The data is interesting because gzip, the oldest tool, got the data down to 30% of the original size in almost no time. The more efficient bzip2 compressed the data to 26% of the original size, but took almost 20 times longer. And xz was terrific, compressing the data down to just 22%, but took over 250 times longer than gzip.

Clearly, if the time taken and the memory used by the process are not an issue, then xz is the clear winner purely in terms of compression ratio.

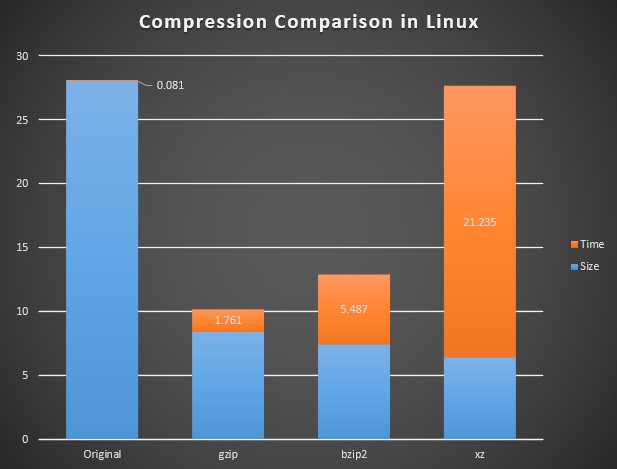

Let us look at the same data in a different way

If you do not care how long it takes to compress data, then you don’t need to bother with this second analysis. But if size and time are equally important to you, you will notice that the first option (uncompressed size plus time) comes out almost the same as the last option (heavily compressed size plus time). In effect, if time is an equally important factor, then the size reduction from 26% to 22% at the cost of an equally demanding increase in time does not look very inviting.
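The same point can be made with simple arithmetic on the rounded figures quoted earlier (30%, 26% and 22% of the original size, at roughly 1, 20 and 250 times gzip's run time). The sketch below is one possible way of looking at the marginal gain of each step, not the calculation behind the chart; `marginal_steps` is a made-up helper.

```python
# Rounded figures from this article: (tool, size as % of original, time relative to gzip).
results = [("gzip", 30, 1), ("bzip2", 26, 20), ("xz", 22, 250)]


def marginal_steps(rows):
    """For each step to the next tool: size points saved and time multiplier."""
    steps = []
    for (prev, prev_size, prev_time), (cur, size, cur_time) in zip(rows, rows[1:]):
        steps.append((f"{prev} -> {cur}", prev_size - size, cur_time / prev_time))
    return steps


for label, points_saved, time_factor in marginal_steps(results):
    print(f"{label}: saves {points_saved} points of size for {time_factor:g}x the time")
# gzip -> bzip2: saves 4 points of size for 20x the time
# bzip2 -> xz: saves 4 points of size for 12.5x the time
```

Each step buys the same four percentage points of size, but moving on to xz multiplies the time by a further 12.5 on top of what bzip2 already costs.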

Also, I have not shown the CPU usage for each of the steps above, but in reality each of the options uses almost 100% of the CPU while it is compressing data. This might not be acceptable if you are running your own web server and can’t tie up the CPU for too long.

My takeaway from this is that bzip2 is still the clear winner in terms of finding a good balance between compression ratio and time taken. It compresses data far more quickly than xz, so your CPU is not held up for as long, and it uses much less memory.

Every situation is different and your use case might not be so simple. So use your judgement to figure out what works for you and use the right tools.
